Do Genetically Smarter Populations Climb the Civilization Ladder Earlier?

A quantitative analysis of ancient DNA and archaeological stage

Ancient DNA contains a surprise. When researchers compute polygenic scores linked to educational attainment from the genomes of prehistoric skeletons, the scores rise through time. Hunter-gatherers score lower than early farmers, early farmers lower than Bronze Age populations, and so on. The standard interpretation is that this reflects something about the passage of time itself, such as selection, migration, demographic turnover, or some mixture of all three.

But time is not the only thing changing across that span. Social and technological complexity are changing too. A Paleolithic forager band and an Iron Age kingdom are not just separated by millennia. They are separated by farming, writing, cities, specialization, storage, hierarchy, and the entire accumulated weight of what we loosely call civilization. So here is the question worth asking: when those polygenic scores rise through ancient history, are we really just watching a clock tick, or are we watching something track the emergence of more complex ways of organizing human life?

Using ancient individuals from the AADR dataset, I assigned each archaeological period a civilization-stage score and asked whether that score predicts educational-attainment polygenic scores even after controlling for absolute date. In practice, that meant coding Paleo-Mesolithic groups as 1, Neolithic groups as 2, Copper Age groups as 3, Bronze Age groups as 4, and Iron Age groups as 5.
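The coding step can be sketched in a few lines of Python. This is only an illustration: the period labels used as dictionary keys are placeholders, the helper name `stage_score` is hypothetical, and the real AADR annotation strings are more varied and would need cleaning before a lookup like this works.

```python
from typing import Optional

# Ordinal civilization-stage scores as described in the text.
# The label strings are illustrative placeholders, not AADR's own annotations.
STAGE_SCORES = {
    "Paleo-Mesolithic": 1,
    "Neolithic": 2,
    "Copper Age": 3,
    "Bronze Age": 4,
    "Iron Age": 5,
}


def stage_score(period: str) -> Optional[int]:
    """Return the 1-5 stage score, or None for periods outside the five stages."""
    return STAGE_SCORES.get(period.strip())


bronze = stage_score("Bronze Age")   # maps to stage 4
unknown = stage_score("Medieval")    # not one of the five stages, so None
```

Returning `None` for unmapped periods keeps them out of the analysis instead of silently forcing them into a stage.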

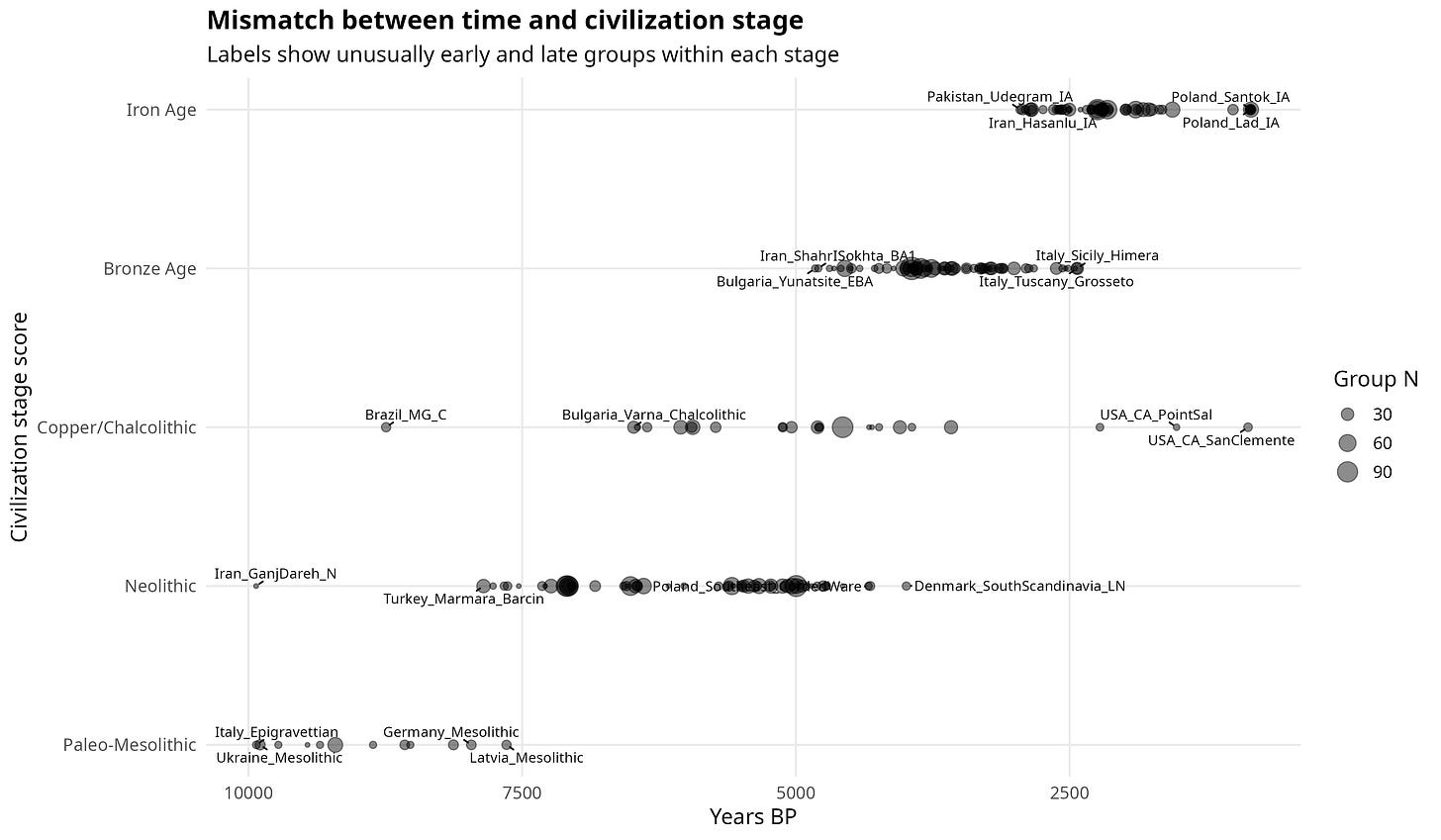

The leverage comes from a basic fact of world prehistory: civilization and chronology do not move in lockstep. Greece had farming millennia before Britain. Some populations at the same date occupied very different social worlds. That mismatch is what makes it possible to pull the two apart and ask which one is actually doing the work.

A time trend by itself could reflect selection, migration, demography, drift, or some mixture of all of them. The harder question is whether the relevant axis is not just time, but civilization.

If you take two ancient populations from different social worlds, but do not let chronology do all the explanatory work, does their place on the civilizational ladder still matter?

The modern world has made one thing hard to miss. Cognitive performance, schooling, institutional complexity, and economic development cluster together. But once we move back into deep history, the picture becomes much hazier. We still know remarkably little about whether the genetic variants associated with educational attainment track not just the passage of time, but the emergence of more complex forms of social organization.

Gregory Clark (2007) argued that preindustrial societies may have undergone differential reproduction in ways that slowly shifted the distribution of traits favorable to economic success. Galor and Moav (2002) built a related idea into a formal evolutionary growth framework. In their model, the Malthusian world did not simply hold humanity down. It also created selection pressures that gradually favored traits complementary to human capital, technology, and later economic takeoff. On that view, the Industrial Revolution was not a bolt from the blue. It was the late visible phase of a much longer process.

I'm not testing their mechanism directly, but the question points in the same direction. If traits linked to educational attainment were historically relevant to the capacity of populations to sustain more complex forms of life, then archaeological stage should retain some predictive power even after absolute time is held constant. In that sense, the civilization-stage score is crude, but it is also useful. It is a rough proxy for where a population stood in the long transition from simple subsistence regimes to more knowledge-intensive and organizationally demanding societies.

At any given date, some populations were still living in relatively simple social worlds while others had already crossed into much more complex ones. And populations grouped into the same broad archaeological stage could be separated by very large spans of time.

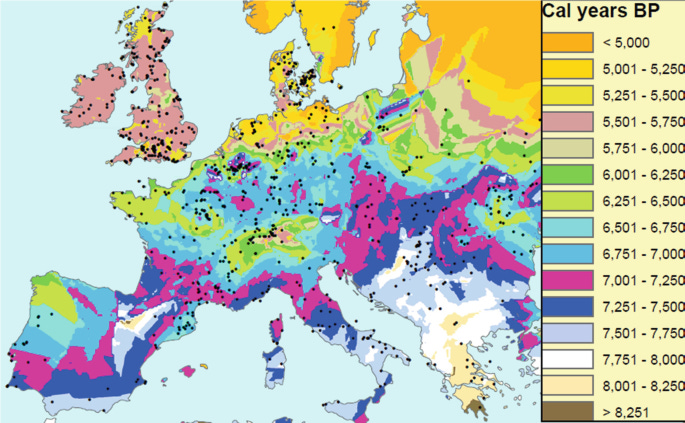

A map of the spread of agriculture makes the point immediately. The transition to farming did not unfold at a uniform pace across Europe. Some regions crossed into the Neolithic much earlier than others, while large parts of the continent remained outside it for centuries or millennia longer. That staggered transition is exactly what makes the present analysis possible. If different populations occupied different civilizational stages at the same broad date, then archaeological stage and chronology can, at least in principle, be pulled apart.

The sample is global rather than limited to Europe, which adds both the regional variation and the statistical power needed to separate stage from date.

Once we exploit the fact that date and stage are misaligned, we can ask the real question: when educational-attainment polygenic scores rise through ancient time, are we just looking at chronology, or are we also looking at civilization?

How I tested it

I used ancient individuals from the public AADR 1240k dataset and grouped them into five broad archaeological stages, from Paleo-Mesolithic to Iron Age. These were coded from 1 to 5 as a rough ordinal measure of civilizational complexity.

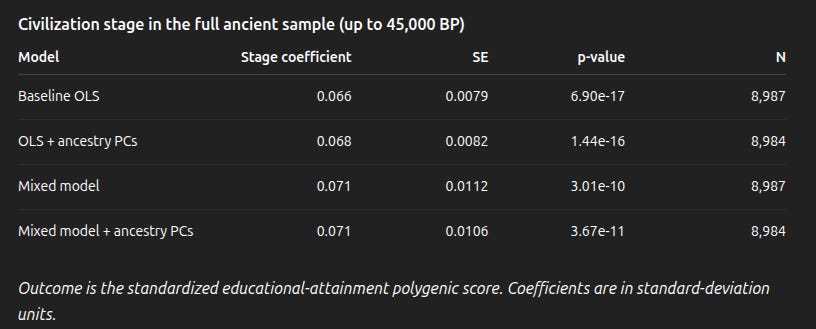

The key outcome was each individual’s educational-attainment polygenic score, derived from ancient DNA. I then asked a simple question: does civilization stage still predict that score once absolute date is held constant?

To test this, I ran models on both the full 45,000-year sample and the Holocene alone, controlling for date, genomic quality, geography, and, in the main specifications, ancestry. That last step matters because it reduces the risk of mistaking large-scale population movements for a civilization effect.
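To make "holding date constant" concrete, here is a minimal sketch of a regression with stage and date entered together. It is not the model behind the results: the data are simulated, the coefficients `TRUE_STAGE` and `TRUE_DATE` are invented for the demonstration, and the controls for genomic quality, geography, and ancestry are left out. The small `ols` helper solves the normal equations in plain Python so the example needs no external libraries.

```python
import random


def ols(X, y):
    """Ordinary least squares by solving the normal equations (X'X) b = X'y."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [
        [sum(row[i] * row[j] for row in X) for j in range(k)]
        + [sum(row[i] * yi for row, yi in zip(X, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


random.seed(0)
TRUE_STAGE, TRUE_DATE = 0.10, -0.02  # invented effects, for illustration only

X, y = [], []
for _ in range(2000):
    date_kyr = random.uniform(2.0, 12.0)  # thousands of years before present
    # Stage is correlated with date but deliberately not determined by it.
    stage = min(5, max(1, round(6 - date_kyr / 2.4 + random.gauss(0, 1))))
    pgs = TRUE_STAGE * stage + TRUE_DATE * date_kyr + random.gauss(0, 0.2)
    X.append([1.0, float(stage), date_kyr])  # intercept, stage, date
    y.append(pgs)

intercept, b_stage, b_date = ols(X, y)
print(f"stage coefficient with date held constant: {b_stage:.3f}")
print(f"date coefficient with stage held constant: {b_date:.3f}")
```

Because stage varies among simulated individuals of the same date, the regression can estimate a stage coefficient separately from the date coefficient. If stage were a deterministic function of date, the two would be much harder to pull apart.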

I also reversed the analysis by treating civilization stage itself as the outcome in ordered probit models. That made it possible to ask the same question from the other direction: are individuals with higher educational-attainment polygenic scores more likely to belong to later archaeological stages, even after the same controls? If the pattern holds both ways, it is less likely to be a quirk of one model.
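For readers unfamiliar with ordered probit, the sketch below shows how such a model turns a latent index into probabilities for the five stages. It does not fit anything. The coefficient `BETA_PGS` and the cutpoints are hypothetical values chosen only to show the mechanics, and the latent index here contains nothing but the polygenic score.

```python
import math


def norm_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def ordered_probit_probs(index, cutpoints):
    """P(stage = k) for k = 1..len(cutpoints)+1, given the latent index x'beta.

    P(stage <= k) = Phi(c_k - index); category probabilities are the
    differences between consecutive cumulative probabilities.
    """
    cumulative = [0.0] + [norm_cdf(c - index) for c in cutpoints] + [1.0]
    return [hi - lo for lo, hi in zip(cumulative, cumulative[1:])]


BETA_PGS = 0.3                       # hypothetical coefficient on the standardized score
CUTPOINTS = [-1.5, -0.5, 0.5, 1.5]   # hypothetical thresholds separating stages 1-5

low_pgs = ordered_probit_probs(BETA_PGS * -1.0, CUTPOINTS)   # one SD below the mean
high_pgs = ordered_probit_probs(BETA_PGS * 1.0, CUTPOINTS)   # one SD above the mean

for stage, (p_low, p_high) in enumerate(zip(low_pgs, high_pgs), start=1):
    print(f"stage {stage}: P(low score) = {p_low:.3f}, P(high score) = {p_high:.3f}")
```

With a positive coefficient, a higher score shifts probability mass away from stage 1 and toward stage 5. That is what "more likely to belong to later archaeological stages" means in this kind of model.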

Time and civilization are related, but not the same thing

Archaeological stage and calendar date are correlated, but far from perfectly. Populations living at similar dates can occupy very different civilizational positions, and populations assigned to the same broad stage can be separated by long stretches of time. This can be seen in the figure below.

[Figure: Mismatch Between Time and Civilization Stage]

If civilization stage predicts EA-linked polygenic scores after time is held constant, then the result cannot be reduced to a simple temporal climb.
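One simple way to check for this kind of mismatch is to compare the date range covered by each stage and see which ranges overlap. The sketch below does that on a handful of made-up rows. The sample ids, dates, and stage assignments are hypothetical and are not drawn from AADR.

```python
from collections import defaultdict

# Hypothetical rows: (sample id, date in years before present, stage score 1-5).
samples = [
    ("A", 8500, 2),
    ("B", 8200, 1),
    ("C", 6000, 2),
    ("D", 5900, 1),
    ("E", 5000, 3),
    ("F", 4800, 2),
    ("G", 4500, 4),
    ("H", 3000, 4),
    ("I", 2900, 5),
]


def date_span_by_stage(rows):
    """Return {stage: (youngest date, oldest date)} in years before present."""
    spans = defaultdict(lambda: [float("inf"), float("-inf")])
    for _, date_bp, stage in rows:
        spans[stage][0] = min(spans[stage][0], date_bp)
        spans[stage][1] = max(spans[stage][1], date_bp)
    return {stage: tuple(span) for stage, span in sorted(spans.items())}


def overlapping_stage_pairs(spans):
    """List pairs of stages whose date ranges overlap."""
    stages = list(spans)
    return [
        (a, b)
        for i, a in enumerate(stages)
        for b in stages[i + 1:]
        if spans[a][0] <= spans[b][1] and spans[b][0] <= spans[a][1]
    ]


spans = date_span_by_stage(samples)
overlaps = overlapping_stage_pairs(spans)
print(spans)
print(overlaps)
```

Any overlapping pair means individuals from different stages lived at comparable dates. That is the variation a model needs in order to estimate a stage effect separately from a date effect.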

What happens in the full sample

The civilization-stage score is a clear positive predictor of educational-attainment polygenic scores even after controlling for date, coverage, and latitude.
